NAG Library Function Document

nag_opt_nlp_solve (e04wdc)

Note: this function uses optional arguments to define choices in the problem specification and in the details of the algorithm. If you wish to use default settings for all of the optional arguments, you need only read Sections 1 to 10 of this document. If, however, you wish to reset some or all of the settings, please refer to Section 11 for a detailed description of the algorithm, to Section 12 for a detailed description of the specification of the optional arguments, and to Section 13 for a detailed description of the monitoring information produced by the function.

1 Purpose

nag_opt_nlp_solve (e04wdc) is designed to minimize an arbitrary smooth function subject to constraints (which may include simple bounds on the variables, linear constraints and smooth nonlinear constraints) using a sequential quadratic programming (SQP) method. As many first derivatives as possible should be supplied by you; any unspecified derivatives are approximated by finite differences. It is not intended for large sparse problems.

nag_opt_nlp_solve (e04wdc) may also be used for unconstrained, bound-constrained and linearly constrained optimization.

nag_opt_nlp_solve (e04wdc) uses forward communication for evaluating the objective function, the nonlinear constraint functions, and any of their derivatives.

The initialization function nag_opt_nlp_init (e04wcc) must have been called before calling nag_opt_nlp_solve (e04wdc).

2 Specification

    #include <nag.h>
    #include <nage04.h>

    void nag_opt_nlp_solve (Integer n, Integer nclin, Integer ncnln, Integer tda, Integer tdcj, Integer tdh, const double a[], const double bl[], const double bu[],
        void (*confun)(Integer *mode, Integer ncnln, Integer n, Integer tdcj, const Integer needc[], const double x[], double ccon[], double cjac[], Integer nstate, Nag_Comm *comm),
        void (*objfun)(Integer *mode, Integer n, const double x[], double *objf, double grad[], Integer nstate, Nag_Comm *comm),
        Integer *majits, Integer istate[], double ccon[], double cjac[], double clamda[], double *objf, double grad[], double h[], double x[], Nag_E04State *state, Nag_Comm *comm, NagError *fail)

    #include <nag.h>
    #include <nage04.h>

    void nag_opt_nlp_init (Nag_E04State *state, NagError *fail)

3 Description

nag_opt_nlp_solve (e04wdc) is designed to solve nonlinear programming problems – the minimization of a smooth nonlinear function subject to a set of constraints on the variables. nag_opt_nlp_solve (e04wdc) is suitable for small dense problems. The problem is assumed to be stated in the following form:

$$\underset{x \in R^n}{\text{minimize}}\; F(x) \quad \text{subject to} \quad l \le \begin{pmatrix} x \\ A_L x \\ c(x) \end{pmatrix} \le u, \tag{1}$$

where $F(x)$ (the objective function) is a nonlinear scalar function, $A_L$ is an $n_L$ by $n$ constant matrix, and $c(x)$ is an $n_N$-vector of nonlinear constraint functions. (The matrix $A_L$ and the vector $c(x)$ may be empty.) The objective function and the constraint functions are assumed to be smooth, here meaning at least twice-continuously differentiable. (The method of nag_opt_nlp_solve (e04wdc) will usually solve (1) if there are only isolated discontinuities away from the solution.) We also write $r(x)$ for the vector of combined functions:

$$r(x) = \begin{pmatrix} x \\ A_L x \\ c(x) \end{pmatrix}.$$

Note that although the bounds on the variables could be included in the definition of the linear constraints, we prefer to distinguish between them for reasons of computational efficiency. For the same reason, the linear constraints should not be included in the definition of the nonlinear constraints. Upper and lower bounds are specified for all the variables and for all the constraints. An equality constraint on $r_i$ can be specified by setting $l_i = u_i$. If certain bounds are not present, the associated elements of $l$ or $u$ can be set to special values that will be treated as $-\infty$ or $+\infty$. (See the description of the optional argument Infinite Bound Size.)

A typical invocation of nag_opt_nlp_solve (e04wdc) is:

nag_opt_nlp_init(&state, ...);

nag_opt_nlp_option_set_file(ispecs, &state, ...);

nag_opt_nlp_solve(n, nclin, ncnln, ...);

where nag_opt_nlp_option_set_file (e04wec) reads a file of optional definitions.

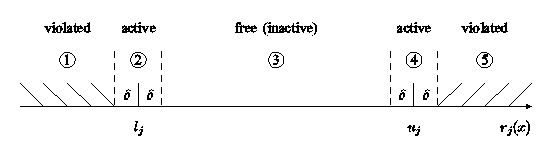

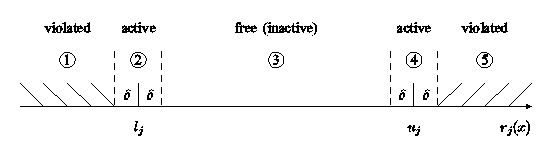

Figure 1 illustrates the feasible region for the $j$th pair of constraints $l_j \le r_j(x) \le u_j$. The quantity $\delta$ is the Feasibility Tolerance, which can be set by you (see Section 12). The constraints $l_j \le r_j \le u_j$ are considered ‘satisfied’ if $r_j$ lies in Regions 2, 3 or 4, and ‘inactive’ if $r_j$ lies in Region 3. The constraint $r_j \ge l_j$ is considered ‘active’ in Region 2, and ‘violated’ in Region 1. Similarly, $r_j \le u_j$ is active in Region 4, and violated in Region 5. For equality constraints ($l_j = u_j$), Regions 2 and 4 are the same and Region 3 is empty.

Figure 1: Illustration of the constraints $l_j \le r_j \le u_j$
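The five regions can be expressed as a small classification routine. The sketch below is illustrative only: the function names are hypothetical, `r`, `l`, `u` and `delta` stand for a constraint value, its two bounds and the feasibility tolerance, and it assumes that each ‘active’ region is the band of half-width `delta` around the corresponding bound.

```c
/* Return the Figure 1 region number (1..5) for a value r with bounds
   l <= u and tolerance delta.  Assumed layout: Region 2 is the band
   [l - delta, l + delta] and Region 4 is the band [u - delta, u + delta]. */
int constraint_region(double r, double l, double u, double delta)
{
    if (r < l - delta) return 1;   /* lower bound violated          */
    if (r > u + delta) return 5;   /* upper bound violated          */
    if (r <= l + delta) return 2;  /* lower bound active            */
    if (r >= u - delta) return 4;  /* upper bound active            */
    return 3;                      /* strictly inside: inactive     */
}

/* A constraint pair counts as 'satisfied' in Regions 2, 3 or 4. */
int constraint_satisfied(double r, double l, double u, double delta)
{
    int region = constraint_region(r, l, u, delta);
    return region >= 2 && region <= 4;
}
```

For an equality constraint (`l == u`) the routine reports Region 2, which coincides with Region 4 in that case.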

If there are no nonlinear constraints in (1) and $F$ is linear or quadratic, then it will generally be more efficient to use one of nag_opt_lp (e04mfc), nag_opt_lin_lsq (e04ncc) or nag_opt_qp (e04nfc). If the problem is large and sparse and does have nonlinear constraints, then nag_opt_sparse_nlp_solve (e04vhc) should be used, since nag_opt_nlp_solve (e04wdc) treats all matrices as dense.

You must supply an initial estimate of the solution to (1), together with functions that define $F(x)$ and $c(x)$ with as many first partial derivatives as possible; unspecified derivatives are approximated by finite differences; see Section 12.1 for a discussion of the optional argument Derivative Level.

The objective function is defined by objfun, and the nonlinear constraints are defined by confun. Note that if there are any nonlinear constraints then the first call to confun will precede the first call to objfun.

For maximum reliability, it is preferable for you to provide all partial derivatives (see Chapter 8 of Gill et al. (1981), for a detailed discussion). If all gradients cannot be provided, it is similarly advisable to provide as many as possible. While developing objfun and confun, the optional argument Verify Level should be used to check the calculation of any known gradients.
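Independently of the library's own derivative checking, a hand-coded gradient can be sanity-checked against central differences before it is passed to the solver. The sketch below is not the library's procedure; the function names are hypothetical, and the objective $x_1 x_4 (x_1 + x_2 + x_3) + x_3$ is used purely as an illustrative test function.

```c
/* Illustrative objective: x1*x4*(x1+x2+x3) + x3 (0-based array indices). */
double demo_objective(const double x[4])
{
    return x[0] * x[3] * (x[0] + x[1] + x[2]) + x[2];
}

/* Hand-coded gradient of demo_objective. */
void demo_gradient(const double x[4], double g[4])
{
    g[0] = x[3] * (2.0 * x[0] + x[1] + x[2]);
    g[1] = x[0] * x[3];
    g[2] = x[0] * x[3] + 1.0;
    g[3] = x[0] * (x[0] + x[1] + x[2]);
}

/* Largest absolute gap between the hand-coded gradient and a
   central-difference estimate computed with step h. */
double max_gradient_error(const double x0[4], double h)
{
    double x[4], g[4], worst = 0.0;
    int i;

    demo_gradient(x0, g);
    for (i = 0; i < 4; i++)
        x[i] = x0[i];

    for (i = 0; i < 4; i++) {
        double fplus, fminus, err;
        x[i] = x0[i] + h;
        fplus = demo_objective(x);
        x[i] = x0[i] - h;
        fminus = demo_objective(x);
        x[i] = x0[i];                       /* restore the coordinate */
        err = (fplus - fminus) / (2.0 * h) - g[i];
        if (err < 0.0)
            err = -err;
        if (err > worst)
            worst = err;
    }
    return worst;
}
```

A large value returned by `max_gradient_error` for a sensible step (for example `1e-5`) usually points to a coding error in the gradient.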

The method used by nag_opt_nlp_solve (e04wdc) is based on NPOPT, which is part of the SNOPT package described in Gill et al. (2005b), and the algorithm it uses is described in detail in Section 11.

4 References

Eldersveld S K (1991) Large-scale sequential quadratic programming algorithms PhD Thesis Department of Operations Research, Stanford University, Stanford

Fourer R (1982) Solving staircase linear programs by the simplex method Math. Programming 23 274–313

Gill P E, Murray W and Saunders M A (2002) SNOPT: An SQP Algorithm for Large-scale Constrained Optimization SIAM J. Optim. 12 979–1006

Gill P E, Murray W and Saunders M A (2005a) Users' guide for SQOPT 7: a Fortran package for large-scale linear and quadratic programming Report NA 05-1 Department of Mathematics, University of California, San Diego http://www.ccom.ucsd.edu/~peg/papers/sqdoc7.pdf

Gill P E, Murray W and Saunders M A (2005b) Users' guide for SNOPT 7.1: a Fortran package for large-scale linear nonlinear programming Report NA 05-2 Department of Mathematics, University of California, San Diego http://www.ccom.ucsd.edu/~peg/papers/sndoc7.pdf

Gill P E, Murray W, Saunders M A and Wright M H (1986) Users' guide for NPSOL (Version 4.0): a Fortran package for nonlinear programming Report SOL 86-2 Department of Operations Research, Stanford University

Gill P E, Murray W, Saunders M A and Wright M H (1992) Some theoretical properties of an augmented Lagrangian merit function Advances in Optimization and Parallel Computing (ed P M Pardalos) 101–128 North Holland

Gill P E, Murray W and Wright M H (1981) Practical Optimization Academic Press

Hock W and Schittkowski K (1981) Test Examples for Nonlinear Programming Codes. Lecture Notes in Economics and Mathematical Systems 187 Springer–Verlag

5 Arguments

- 1: n – Integer (Input)

On entry: $n$, the number of variables.

Constraint: $\mathrm{n} > 0$.

- 2: nclin – Integer (Input)

On entry: $n_L$, the number of general linear constraints.

Constraint: $\mathrm{nclin} \ge 0$.

- 3: ncnln – Integer (Input)

On entry: $n_N$, the number of nonlinear constraints.

Constraint: $\mathrm{ncnln} \ge 0$.

- 4: tda – Integer (Input)

On entry: the stride separating matrix column elements in the array a.

Constraints:

- if $\mathrm{nclin} > 0$, $\mathrm{tda} \ge \mathrm{n}$;
- otherwise $\mathrm{tda} \ge 1$.

- 5: tdcj – Integer (Input)

On entry: the stride separating matrix column elements in the array cjac.

Constraints:

- if $\mathrm{ncnln} > 0$, $\mathrm{tdcj} \ge \mathrm{n}$;
- otherwise $\mathrm{tdcj} \ge 1$.

- 6: tdh – Integer (Input)

On entry: the stride separating matrix column elements in the array h.

Constraint: $\mathrm{tdh} \ge \mathrm{n}$ unless the optional argument Hessian Limited Memory is in effect. If Hessian Limited Memory is in effect, array h is not referenced.

- 7: a[] – const double (Input)

Note: the dimension, dim, of the array a must be at least $\max(1, \mathrm{nclin} \times \mathrm{tda})$.

The $(i,j)$th element of the matrix $A$ is stored in $\mathrm{a}[(i-1) \times \mathrm{tda} + j - 1]$.

On entry: the $i$th row of a contains the $i$th row of the matrix $A_L$ of general linear constraints in (1). That is, the $i$th row contains the coefficients of the $i$th general linear constraint, for $i = 1, 2, \dots, \mathrm{nclin}$.

If $\mathrm{nclin} = 0$, the array a is not referenced.
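The storage scheme for a (and likewise for cjac and h, with their own strides) is ordinary row-major storage. A minimal sketch, using hypothetical helper names and assuming 1-based matrix indices mapped onto a 0-based C array with the stride as the row length:

```c
#include <stddef.h>

/* Offset of the (i,j)th matrix element (1-based i and j) in a row-major
   array whose consecutive rows are td elements apart. */
size_t matrix_offset(int i, int j, int td)
{
    return (size_t)(i - 1) * (size_t)td + (size_t)(j - 1);
}

/* Copy the n coefficients of the ith general linear constraint into a. */
void set_linear_row(double a[], int tda, int i, int n, const double coeffs[])
{
    int j;
    for (j = 1; j <= n; j++)
        a[matrix_offset(i, j, tda)] = coeffs[j - 1];
}
```

With `tda` equal to the number of variables the rows are packed contiguously; a larger stride simply leaves unused padding at the end of each row.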

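- 8: bl[] – const double (Input)

- 9: bu[] – const double (Input)

On entry: bl must contain the lower bounds and bu the upper bounds for all the constraints, in the following order. The first $n$ elements of each array must contain the bounds on the variables, the next $n_L$ elements the bounds for the general linear constraints (if any) and the next $n_N$ elements the bounds for the general nonlinear constraints (if any). To specify a nonexistent lower bound (i.e., $l_j = -\infty$), set $\mathrm{bl}[j-1] \le -\mathit{bigbnd}$, and to specify a nonexistent upper bound (i.e., $u_j = +\infty$), set $\mathrm{bu}[j-1] \ge \mathit{bigbnd}$; where $\mathit{bigbnd}$ is the optional argument Infinite Bound Size. To specify the $j$th constraint as an equality, set $\mathrm{bl}[j-1] = \mathrm{bu}[j-1] = \beta$, say, where $|\beta| < \mathit{bigbnd}$.

Constraints:

- $\mathrm{bl}[j-1] \le \mathrm{bu}[j-1]$, for $j = 1, 2, \dots, \mathrm{n} + \mathrm{nclin} + \mathrm{ncnln}$;
- if $\mathrm{bl}[j-1] = \mathrm{bu}[j-1] = \beta$, $|\beta| < \mathit{bigbnd}$.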
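As an illustration of the ordering (variables first, then linear constraints, then nonlinear constraints), the sketch below fills bl and bu for a hypothetical problem with four variables bounded by $1 \le x_i \le 5$, one linear constraint $x_1 + x_2 + x_3 + x_4 \le 20$, and two nonlinear constraints $x_1^2 + x_2^2 + x_3^2 + x_4^2 \le 40$ and $x_1 x_2 x_3 x_4 \ge 25$. The macro names are invented for the example, and `DEMO_BIGBND` is an assumed stand-in for whatever infinite bound size is in effect.

```c
#define DEMO_N      4        /* number of variables             */
#define DEMO_NCLIN  1        /* number of linear constraints    */
#define DEMO_NCNLN  2        /* number of nonlinear constraints */
#define DEMO_BIGBND 1.0e20   /* assumed "infinite" bound size   */

/* Fill bl and bu, each of length DEMO_N + DEMO_NCLIN + DEMO_NCNLN. */
void demo_set_bounds(double bl[], double bu[])
{
    int j;

    /* Bounds on the variables: 1 <= x_j <= 5. */
    for (j = 0; j < DEMO_N; j++) {
        bl[j] = 1.0;
        bu[j] = 5.0;
    }

    /* Linear constraint: no lower bound, upper bound 20. */
    bl[DEMO_N] = -DEMO_BIGBND;
    bu[DEMO_N] = 20.0;

    /* First nonlinear constraint: no lower bound, upper bound 40. */
    bl[DEMO_N + DEMO_NCLIN] = -DEMO_BIGBND;
    bu[DEMO_N + DEMO_NCLIN] = 40.0;

    /* Second nonlinear constraint: lower bound 25, no upper bound. */
    bl[DEMO_N + DEMO_NCLIN + 1] = 25.0;
    bu[DEMO_N + DEMO_NCLIN + 1] = DEMO_BIGBND;
}
```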

- 10: confun – function, supplied by the user (External Function)

confun must calculate the vector $c(x)$ of nonlinear constraint functions and (optionally) its Jacobian, $\partial c / \partial x$, for a specified $n$-vector $x$. If there are no nonlinear constraints (i.e., $\mathrm{ncnln} = 0$), nag_opt_nlp_solve (e04wdc) will never call confun, so it may be specified as NULLFN. If there are nonlinear constraints, the first call to confun will occur before the first call to objfun.

If all constraint gradients (Jacobian elements) are known (i.e., Derivative Level $= 2$ or $3$), any constant elements may be assigned to cjac once only at the start of the optimization. An element of cjac that is not subsequently assigned in confun will retain its initial value throughout. Constant elements may be loaded in cjac during the first call to confun (signalled by the value of $\mathrm{nstate} = 1$). The ability to preload constants is useful when many Jacobian elements are identically zero, in which case cjac may be initialized to zero and nonzero elements may be reset by confun.

It must be emphasized that, if Derivative Level $< 2$, unassigned elements of cjac are not treated as constant; they are estimated by finite differences, at nontrivial expense.

The specification of confun is:

- 1: mode – Integer * (Input/Output)

On entry: is set by nag_opt_nlp_solve (e04wdc) to indicate which values must be assigned during each call of confun. Only the following values need be assigned, for each value of $i$ such that $\mathrm{needc}[i-1] > 0$:

- $\mathrm{mode} = 0$: The components of ccon corresponding to positive values in needc must be set. Other components and the array cjac are ignored.

- $\mathrm{mode} = 1$: The known components of the rows of cjac corresponding to positive values in needc must be set. Other rows of cjac and the array ccon will be ignored.

- $\mathrm{mode} = 2$: Only the elements of ccon corresponding to positive values of needc need to be set (and similarly for the known components of the rows of cjac).

On exit: may be used to indicate that you are unable or unwilling to evaluate the constraint functions at the current $x$.

During the linesearch, the constraint functions are evaluated at points of the form $x = x_k + \alpha p_k$ after they have already been evaluated satisfactorily at $x_k$. At any such $x$, if you set $\mathrm{mode} = -1$, nag_opt_nlp_solve (e04wdc) will evaluate the functions at some point closer to $x_k$ (where they are more likely to be defined).

If for some reason you wish to terminate the current problem, set $\mathrm{mode} < -1$.

- 2: ncnln – Integer (Input)

On entry: $n_N$, the number of nonlinear constraints.

- 3: n – Integer (Input)

On entry: $n$, the number of variables.

- 4: tdcj – Integer (Input)

On entry: the stride used in the array cjac.

- 5: needc[ncnln] – const Integer (Input)

On entry: the indices of the elements of ccon and/or cjac that must be evaluated by confun. If $\mathrm{needc}[i-1] > 0$, the $i$th element of ccon and/or the available elements of the $i$th row of cjac (see argument mode) must be evaluated at $x$.

- 6: x[n] – const double (Input)

On entry: $x$, the vector of variables at which the constraint functions and/or the available elements of the constraint Jacobian are to be evaluated.

- 7: ccon[] – double (Output)

On exit: if $\mathrm{needc}[i-1] > 0$ and $\mathrm{mode} = 0$ or $2$, $\mathrm{ccon}[i-1]$ must contain the value of the $i$th constraint at $x$. The remaining elements of ccon, corresponding to the non-positive elements of needc, are ignored.

- 8: cjac[] – double (Input/Output)

On entry: the elements of cjac are set to special values that enable nag_opt_nlp_solve (e04wdc) to detect whether they are reset by confun.

On exit: if $\mathrm{needc}[i-1] > 0$ and $\mathrm{mode} = 1$ or $2$, the $i$th row of cjac must contain the available elements of the vector $\nabla c_i$ given by

$$\nabla c_i = \left( \frac{\partial c_i}{\partial x_1}, \frac{\partial c_i}{\partial x_2}, \dots, \frac{\partial c_i}{\partial x_n} \right)^T,$$

where $\partial c_i / \partial x_j$ is the partial derivative of the $i$th constraint with respect to the $j$th variable, evaluated at the point $x$. See also the argument nstate. The remaining rows of cjac, corresponding to non-positive elements of needc, are ignored.

If all elements of the constraint Jacobian are known (i.e., Derivative Level $= 2$ or $3$), any constant elements may be assigned to cjac one time only at the start of the optimization. An element of cjac that is not subsequently assigned in confun will retain its initial value throughout. Constant elements may be loaded into cjac during the first call to confun (signalled by the value $\mathrm{nstate} = 1$). The ability to preload constants is useful when many Jacobian elements are identically zero, in which case cjac may be initialized to zero and nonzero elements may be reset by confun.

Note that constant nonzero elements do affect the values of the constraints. Thus, if $\mathrm{cjac}[(i-1) \times \mathrm{tdcj} + j - 1]$ is set to a constant value, it need not be reset in subsequent calls to confun, but the value $\mathrm{cjac}[(i-1) \times \mathrm{tdcj} + j - 1] \times \mathrm{x}[j-1]$ must nonetheless be added to $\mathrm{ccon}[i-1]$. For example, if $\mathrm{cjac}[0] = 2$ and $\mathrm{cjac}[1] = -5$ then the term $2 \times \mathrm{x}[0] - 5 \times \mathrm{x}[1]$ must be included in the definition of $\mathrm{ccon}[0]$.

It must be emphasized that, if Derivative Level $= 0$ or $1$, unassigned elements of cjac are not treated as constant; they are estimated by finite differences, at nontrivial expense. If you do not supply a value for the optional argument Difference Interval, an interval for each element of $x$ is computed automatically at the start of the optimization. The automatic procedure can usually identify constant elements of cjac, which are then computed once only by finite differences.

- 9: nstate – Integer (Input)

On entry: if $\mathrm{nstate} = 1$ then nag_opt_nlp_solve (e04wdc) is calling confun for the first time. This argument setting allows you to save computation time if certain data must be read or calculated only once.

- 10: comm – Nag_Comm *

Pointer to structure of type Nag_Comm; the following members are relevant to confun.

- user – double *

- iuser – Integer *

- p – Pointer

The type Pointer will be void *. Before calling nag_opt_nlp_solve (e04wdc) you may allocate memory and initialize these pointers with various quantities for use by confun when called from nag_opt_nlp_solve (e04wdc) (see Section 3.2.1.1 in the Essential Introduction).

confun should be tested separately before being used in conjunction with nag_opt_nlp_solve (e04wdc). See also the description of the optional argument Verify Level.
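A constraint callback following the argument list above might look like the sketch below. It is a stand-alone illustration, not the library's example program: `Integer` and `Demo_Comm` are local stand-ins for the types that `<nag.h>` would provide, `demo_confun` is a hypothetical name, the two constraints are $x_1^2 + x_2^2 + x_3^2 + x_4^2$ and $x_1 x_2 x_3 x_4$, and mode values 0, 1 and 2 are assumed to request constraint values only, Jacobian rows only, and both.

```c
typedef long Integer;            /* stand-in for the NAG Integer type     */
typedef struct {                 /* stand-in for the members of Nag_Comm  */
    double  *user;
    Integer *iuser;
    void    *p;
} Demo_Comm;

void demo_confun(Integer *mode, Integer ncnln, Integer n, Integer tdcj,
                 const Integer needc[], const double x[], double ccon[],
                 double cjac[], Integer nstate, Demo_Comm *comm)
{
    int want_c = (*mode == 0 || *mode == 2);   /* constraint values wanted */
    int want_j = (*mode == 1 || *mode == 2);   /* Jacobian rows wanted     */
    Integer j;

    (void)ncnln; (void)n; (void)nstate; (void)comm;   /* unused here */

    if (needc[0] > 0) {
        if (want_c)
            ccon[0] = x[0]*x[0] + x[1]*x[1] + x[2]*x[2] + x[3]*x[3];
        if (want_j)
            for (j = 0; j < 4; j++)
                cjac[0 * tdcj + j] = 2.0 * x[j];      /* row 1 */
    }
    if (needc[1] > 0) {
        if (want_c)
            ccon[1] = x[0] * x[1] * x[2] * x[3];
        if (want_j) {
            cjac[1 * tdcj + 0] = x[1] * x[2] * x[3];  /* row 2 */
            cjac[1 * tdcj + 1] = x[0] * x[2] * x[3];
            cjac[1 * tdcj + 2] = x[0] * x[1] * x[3];
            cjac[1 * tdcj + 3] = x[0] * x[1] * x[2];
        }
    }
}
```

Rows whose needc entry is not positive are left untouched, which matches the statement that such entries are ignored.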

- 11: objfun – function, supplied by the user (External Function)

objfun must calculate the objective function $F(x)$ and (optionally) its gradient $g(x) = \partial F / \partial x$ for a specified $n$-vector $x$.

The specification of objfun is:

- 1: mode – Integer * (Input/Output)

On entry: is set by nag_opt_nlp_solve (e04wdc) to indicate which values must be assigned during each call of objfun. Only the following values need be assigned:

- $\mathrm{mode} = 0$: objf.

- $\mathrm{mode} = 1$: All available elements of grad.

- $\mathrm{mode} = 2$: objf and all available elements of grad.

On exit: may be used to indicate that you are unable or unwilling to evaluate the objective function at the current $x$.

During the linesearch, the function is evaluated at points of the form $x = x_k + \alpha p_k$ after it has already been evaluated satisfactorily at $x_k$. For any such $x$, if you set $\mathrm{mode} = -1$, nag_opt_nlp_solve (e04wdc) will reduce $\alpha$ and evaluate the function again (closer to $x_k$, where it is more likely to be defined).

If for some reason you wish to terminate the current problem, set $\mathrm{mode} < -1$.

- 2: n – Integer (Input)

On entry: $n$, the number of variables.

- 3: x[n] – const double (Input)

On entry: $x$, the vector of variables at which the objective function and/or all available elements of its gradient are to be evaluated.

- 4: objf – double * (Output)

On exit: if $\mathrm{mode} = 0$ or $2$, objf must be set to the value of the objective function at $x$.

- 5: grad[n] – double (Input/Output)

On entry: the elements of grad are set to special values.

On exit: if $\mathrm{mode} = 1$ or $2$, grad must return the available elements of the gradient evaluated at $x$, i.e., $\mathrm{grad}[i-1]$ contains the partial derivative $\partial F / \partial x_i$.

- 6: nstate – Integer (Input)

On entry: if $\mathrm{nstate} = 1$ then nag_opt_nlp_solve (e04wdc) is calling objfun for the first time. This argument setting allows you to save computation time if certain data must be read or calculated only once.

- 7: comm – Nag_Comm *

Pointer to structure of type Nag_Comm; the following members are relevant to objfun.

- user – double *

- iuser – Integer *

- p – Pointer

The type Pointer will be void *. Before calling nag_opt_nlp_solve (e04wdc) you may allocate memory and initialize these pointers with various quantities for use by objfun when called from nag_opt_nlp_solve (e04wdc) (see Section 3.2.1.1 in the Essential Introduction).

objfun should be tested separately before being used in conjunction with nag_opt_nlp_solve (e04wdc). See also the description of the optional argument Verify Level.
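An objective callback with the argument list described above can be sketched in the same way. Again this is only an illustration: `Integer` and `Sketch_Comm` are local stand-ins for the NAG types, `demo_objfun` is a hypothetical name, the objective is $x_1 x_4 (x_1 + x_2 + x_3) + x_3$, and mode values 0, 1 and 2 are assumed to request the function value, the gradient, and both.

```c
typedef long Integer;            /* stand-in for the NAG Integer type     */
typedef struct {                 /* stand-in for the members of Nag_Comm  */
    double  *user;
    Integer *iuser;
    void    *p;
} Sketch_Comm;

void demo_objfun(Integer *mode, Integer n, const double x[], double *objf,
                 double grad[], Integer nstate, Sketch_Comm *comm)
{
    double sum = x[0] + x[1] + x[2];

    (void)n; (void)nstate; (void)comm;      /* unused in this sketch */

    if (*mode == 0 || *mode == 2)           /* function value requested */
        *objf = x[0] * x[3] * sum + x[2];

    if (*mode == 1 || *mode == 2) {         /* gradient requested */
        grad[0] = x[3] * (2.0 * x[0] + x[1] + x[2]);
        grad[1] = x[0] * x[3];
        grad[2] = x[0] * x[3] + 1.0;
        grad[3] = x[0] * sum;
    }
}
```

Testing such a callback in isolation, as recommended above, only requires a small driver that calls it with each mode value and inspects the outputs.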

- 12: majits – Integer * (Output)

On exit: the number of major iterations performed.

- 13: istate[] – Integer (Input/Output)

On entry: is an integer array that need not be initialized if nag_opt_nlp_solve (e04wdc) is called with the Cold Start option (the default).

If optional argument Warm Start has been chosen, every element of istate must be set. If nag_opt_nlp_solve (e04wdc) has just been called on a problem with the same dimensions, istate already contains valid values. Otherwise, $\mathrm{istate}[j-1]$ should indicate whether either of the constraints $r_j(x) \ge l_j$ or $r_j(x) \le u_j$ is expected to be active at a solution of (1).

The ordering of istate is the same as for bl, bu and $r(x)$, i.e., the first n components of istate refer to the upper and lower bounds on the variables, the next nclin refer to the bounds on $A_L x$, and the last ncnln refer to the bounds on $c(x)$. Possible values of $\mathrm{istate}[j-1]$ follow:

| istate[j-1] | Meaning |
| --- | --- |
| 0 | Neither $r_j(x) \ge l_j$ nor $r_j(x) \le u_j$ is expected to be active. |
| 1 | $r_j(x) \ge l_j$ is expected to be active. |
| 2 | $r_j(x) \le u_j$ is expected to be active. |
| 3 | This may be used if $l_j = u_j$. Normally an equality constraint $r_j(x) = l_j = u_j$ is active at a solution. |

The values 1, 2 or 3 all have the same effect when $\mathrm{bl}[j-1] = \mathrm{bu}[j-1]$. If necessary, nag_opt_nlp_solve (e04wdc) will override your specification of istate, so that a poor choice will not cause the algorithm to fail.

On exit: describes the status of the constraints $l \le r(x) \le u$. For the $j$th lower or upper bound, $j = 1, 2, \dots, \mathrm{n} + \mathrm{nclin} + \mathrm{ncnln}$, the possible values of $\mathrm{istate}[j-1]$ are as follows (see Figure 1). $\delta$ is the appropriate feasibility tolerance.

| istate[j-1] | Meaning |
| --- | --- |
| -2 | (Region 1) The lower bound is violated by more than $\delta$. |
| -1 | (Region 5) The upper bound is violated by more than $\delta$. |
| 0 | (Region 3) Both bounds are satisfied by more than $\delta$. |
| 1 | (Region 2) The lower bound is active (to within $\delta$). |
| 2 | (Region 4) The upper bound is active (to within $\delta$). |
| 3 | ($l_j = u_j$) The bounds are equal and the equality constraint is satisfied (to within $\delta$). |

These values of istate are labelled in the printed solution according to Table 1.

| Region | 1 | 2 | 3 | 4 | 5 | 2 ≡ 4 |
| --- | --- | --- | --- | --- | --- | --- |
| istate[j-1] | -2 | 1 | 0 | 2 | -1 | 3 |
| Printed solution | -- | LL | FR | UL | ++ | EQ |

Table 1: Labels used in the printed solution for the regions in Figure 1

- 14: ccon[] – double (Input/Output)

On entry: ccon need not be initialized if the (default) optional argument Cold Start is used.

For a Warm Start, and if $\mathrm{ncnln} > 0$, ccon contains values of the nonlinear constraint functions $c_i$, for $i = 1, 2, \dots, \mathrm{ncnln}$, calculated in a previous call to nag_opt_nlp_solve (e04wdc).

On exit: if $\mathrm{ncnln} > 0$, $\mathrm{ccon}[i-1]$ contains the value of the $i$th nonlinear constraint function $c_i$ at the final iterate, for $i = 1, 2, \dots, \mathrm{ncnln}$.

If $\mathrm{ncnln} = 0$, the array ccon is not referenced.

- 15: cjac[] – double (Input/Output)

Note: the dimension, dim, of the array cjac must be at least $\max(1, \mathrm{ncnln} \times \mathrm{tdcj})$.

On entry: in general, cjac need not be initialized before the call to nag_opt_nlp_solve (e04wdc). However, if Derivative Level $= 2$ or $3$, any constant elements of cjac may be initialized. Such elements need not be reassigned on subsequent calls to confun.

On exit: if $\mathrm{ncnln} > 0$, cjac contains the Jacobian matrix of the nonlinear constraint functions at the final iterate, i.e., $\mathrm{cjac}[(i-1) \times \mathrm{tdcj} + j - 1]$ contains the partial derivative of the $i$th constraint function with respect to the $j$th variable, for $i = 1, 2, \dots, \mathrm{ncnln}$ and $j = 1, 2, \dots, \mathrm{n}$. (See the discussion of argument cjac under confun.)

If $\mathrm{ncnln} = 0$, the array cjac is not referenced.

- 16: clamda[] – double (Input/Output)

On entry: need not be set if the (default) optional argument Cold Start is used.

If the optional argument Warm Start has been chosen, $\mathrm{clamda}[j-1]$ must contain a multiplier estimate for each nonlinear constraint, with a sign that matches the status of the constraint specified by the istate array, for $j = \mathrm{n} + \mathrm{nclin} + 1, \dots, \mathrm{n} + \mathrm{nclin} + \mathrm{ncnln}$. The remaining elements need not be set. If the $j$th constraint is defined as ‘inactive’ by the initial value of the istate array (i.e., $\mathrm{istate}[j-1] = 0$), $\mathrm{clamda}[j-1]$ should be zero; if the $j$th constraint is an inequality active at its lower bound (i.e., $\mathrm{istate}[j-1] = 1$), $\mathrm{clamda}[j-1]$ should be non-negative; if the $j$th constraint is an inequality active at its upper bound (i.e., $\mathrm{istate}[j-1] = 2$), $\mathrm{clamda}[j-1]$ should be non-positive. If necessary, the function will modify clamda to match these rules.

On exit: the values of the QP multipliers from the last QP subproblem. $\mathrm{clamda}[j-1]$ should be non-negative if $\mathrm{istate}[j-1] = 1$ and non-positive if $\mathrm{istate}[j-1] = 2$.
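The sign rules for a warm start can be checked, or enforced, before the call. The sketch below is one plausible way of doing so and is not a description of what the library does internally: the function name is hypothetical, istate codes 0, 1 and 2 are assumed to mean inactive, active at the lower bound and active at the upper bound, and an estimate with the wrong sign is simply reset to zero.

```c
/* Make multiplier estimates consistent with the constraint status in
   istate, for entries first..last-1 (0-based).  Assumed codes:
   0 = inactive, 1 = active at lower bound, 2 = active at upper bound. */
void match_multiplier_signs(int first, int last, const int istate[],
                            double clamda[])
{
    int j;
    for (j = first; j < last; j++) {
        if (istate[j] == 0)
            clamda[j] = 0.0;                     /* inactive: zero          */
        else if (istate[j] == 1 && clamda[j] < 0.0)
            clamda[j] = 0.0;                     /* lower: non-negative     */
        else if (istate[j] == 2 && clamda[j] > 0.0)
            clamda[j] = 0.0;                     /* upper: non-positive     */
    }
}
```

For the nonlinear constraints only, `first` would be `n + nclin` and `last` would be `n + nclin + ncnln`.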

- 17: objf – double * (Output)

On exit: the value of the objective function at the final iterate.

- 18: grad[n] – double (Output)

On exit: the gradient of the objective function (or its finite difference approximation) at the final iterate.

- 19: h[] – double (Input/Output)

Note: the dimension, dim, of the array h must be at least $\mathrm{n} \times \mathrm{tdh}$.

On entry: h need not be initialized if the (default) optional argument Cold Start is used, and will be set to the identity.

If the optional argument Warm Start has been chosen, h provides the initial approximation of the Hessian of the Lagrangian, i.e., $\mathrm{h}[(i-1) \times \mathrm{tdh} + j - 1] \approx \dfrac{\partial^2 \mathcal{L}(x, \lambda)}{\partial x_i \, \partial x_j}$, where $\mathcal{L}(x, \lambda) = F(x) - c(x)^T \lambda$ and $\lambda$ is an estimate of the Lagrange multipliers. h must be a positive definite matrix.

On exit: contains the Hessian of the Lagrangian at the final estimate $x$.

- 20: x[n] – double (Input/Output)

On entry: an initial estimate of the solution.

On exit: the final estimate of the solution.

- 21: state – Nag_E04State * (Communication Structure)

state contains internal information required for functions in this suite. It must not be modified in any way.

- 22: comm – Nag_Comm * (Communication Structure)

The NAG communication argument (see Section 3.2.1.1 in the Essential Introduction).

- 23: fail – NagError * (Input/Output)

The NAG error argument (see Section 3.6 in the Essential Introduction).

nag_opt_nlp_solve (e04wdc) returns with fail.code = NE_NOERROR if the iterates have converged to a point $x$ that satisfies the first-order Kuhn–Tucker conditions (see Section 13.2) to the accuracy requested by the optional argument Major Optimality Tolerance, i.e., the projected gradient and active constraint residuals are negligible at $x$.

You should check whether the following four conditions are satisfied:

- (i) the final value of rgNorm (see Section 13.2) is significantly less than that at the starting point;

- (ii) during the final major iterations, the values of Step and Minors (see Section 13.1) are both one;

- (iii) the last few values of both rgNorm and SumInf (see Section 13.2) become small at a fast linear rate; and

- (iv) condHz (see Section 13.1) is small.

If all these conditions hold, $x$ is almost certainly a local minimum of (1).

One caution about ‘Optimal solutions’. Some of the variables or slacks may lie outside their bounds more than desired, especially if scaling was requested. Max Primal infeas in the Print file refers to the largest bound infeasibility and which variable is involved. If it is too large, consider restarting with a smaller Minor Feasibility Tolerance (say $10$ times smaller) and perhaps Scale Option $= 0$.

Similarly, Max Dual infeas in the Print file indicates which variable is most likely to be at a nonoptimal value. Broadly speaking, if $\text{Max Dual infeas} / \text{Max pi} = 10^{-d}$, then the objective function would probably change in the $d$th significant digit if optimization could be continued. If $d$ seems too large, consider restarting with a smaller Major Optimality Tolerance.

Finally, Nonlinear constraint violn in the Print file shows the maximum infeasibility for nonlinear rows. If it seems too large, consider restarting with a smaller Major Feasibility Tolerance.

6 Error Indicators and Warnings

- NE_ALLOC_FAIL

Dynamic memory allocation failed.

Internal error: memory allocation failed when attempting to allocate workspace sizes ⟨value⟩ and ⟨value⟩.

- NE_ALLOC_INSUFFICIENT

Internal memory allocation was insufficient. Please contact NAG.

- NE_BAD_PARAM

Basis file dimensions do not match this problem.

On entry, argument ⟨value⟩ had an illegal value.

- NE_BASIS_FAILURE

An error has occurred in the basis package, perhaps indicating incorrect setup of arrays. Set the optional argument Print File and examine the output carefully for further information.

- NE_DERIV_ERRORS

User-supplied function computes incorrect constraint derivatives.

User-supplied function computes incorrect objective derivatives.

- NE_E04WCC_NOT_INIT

Initialization function nag_opt_nlp_init (e04wcc) has not been called.

- NE_INT

On entry, .

Constraint: .

On entry, .

Constraint: .

On entry, .

Constraint: .

On entry, .

Constraint: .

On entry, .

Constraint: .

On entry, .

Constraint: .

- NE_INT_2

On entry, and .

Constraint: .

On entry, and .

Constraint: .

On entry, and .

Constraint: .

On entry, and .

Constraint: .

- NE_INT_3

On entry, $\mathrm{nclin} = $ ⟨value⟩, $\mathrm{tda} = $ ⟨value⟩ and $\mathrm{n} = $ ⟨value⟩.

Constraint: if $\mathrm{nclin} > 0$, $\mathrm{tda} \ge \mathrm{n}$; otherwise $\mathrm{tda} \ge 1$.

On entry, $\mathrm{ncnln} = $ ⟨value⟩, $\mathrm{tdcj} = $ ⟨value⟩ and $\mathrm{n} = $ ⟨value⟩.

Constraint: if $\mathrm{ncnln} > 0$, $\mathrm{tdcj} \ge \mathrm{n}$; otherwise $\mathrm{tdcj} \ge 1$.

- NE_INTERNAL_ERROR

An internal error has occurred in this function. Check the function call and any array sizes. If the call is correct then please contact NAG for assistance.

An unexpected error has occurred. Set the optional argument Print File and examine the output carefully for further information.

- NE_NOT_REQUIRED_ACC

The requested accuracy could not be achieved.

- NE_NUM_DIFFICULTIES

Numerical difficulties have been encountered and no further progress can be made.

- NE_REAL_2

On entry, bounds bl and bu for ⟨value⟩ are equal and infinite. $\mathrm{bl} = \mathrm{bu} = $ ⟨value⟩ and $\mathit{bigbnd} = $ ⟨value⟩.

On entry, bounds for ⟨value⟩ are inconsistent. $\mathrm{bl} = $ ⟨value⟩ and $\mathrm{bu} = $ ⟨value⟩.

- NE_UNBOUNDED

The problem appears to be unbounded. The constraint violation limit has been reached.

The problem appears to be unbounded. The objective function is unbounded.

- NE_USER_STOP

User-supplied constraint function requested termination.

User-supplied objective function requested termination.

- NE_USRFUN_UNDEFINED

Unable to proceed into undefined region of user-supplied function.

User-supplied function is undefined at the first feasible point.

User-supplied function is undefined at the initial point.

- NW_LIMIT_REACHED

Iteration limit reached.

Major iteration limit reached.

The value of the optional argument Superbasics Limit is too small.

- NW_NOT_FEASIBLE

The linear constraints appear to be infeasible.

The problem appears to be infeasible. Infeasibilities have been minimized.

The problem appears to be infeasible. Nonlinear infeasibilities have been minimized.

The problem appears to be infeasible. The linear equality constraints could not be satisfied.

7 Accuracy

If fail.code = NE_NOERROR on exit, then the vector returned in the array x is an estimate of the solution to an accuracy of approximately Major Optimality Tolerance.

8 Parallelism and Performance

nag_opt_nlp_solve (e04wdc) is not threaded by NAG in any implementation.

nag_opt_nlp_solve (e04wdc) makes calls to BLAS and/or LAPACK routines, which may be threaded within the vendor library used by this implementation. Consult the documentation for the vendor library for further information.

Please consult the Users' Note for your implementation for any additional implementation-specific information.

9 Further Comments

This section describes the final output produced by nag_opt_nlp_solve (e04wdc). Intermediate and other output are given in Section 13.

9.1 The Final Output

If

,

the final output, including a listing of the status of every variable and constraint will be sent to the

file descriptors

associated with

. The following describes the output for each variable. A full stop (.) is printed for any numerical value that is zero.

- Variable: gives the name (Variable) and index $j$, for $j = 1, 2, \dots, n$, of the variable.

- State: gives the state of the variable (FR if neither bound is in the working set, EQ if a fixed variable, LL if on its lower bound, UL if on its upper bound, TF if temporarily fixed at its current value). If Value lies outside the upper or lower bounds by more than the Feasibility Tolerance, State will be ++ or -- respectively. (The latter situation can occur only when there is no feasible point for the bounds and linear constraints.)

  A key is sometimes printed before State:

  - A: Alternative optimum possible. The variable is active at one of its bounds, but its Lagrange multiplier is essentially zero. This means that if the variable were allowed to start moving away from its bound then there would be no change to the objective function. The values of the other free variables might change, giving a genuine alternative solution. However, if there are any degenerate variables (labelled D), the actual change might prove to be zero, since one of them could encounter a bound immediately. In either case the values of the Lagrange multipliers might also change.

  - D: Degenerate. The variable is free, but it is equal to (or very close to) one of its bounds.

  - I: Infeasible. The variable is currently violating one of its bounds by more than the Feasibility Tolerance.

- Value: is the value of the variable at the final iteration.

- Lower bound: is the lower bound specified for the variable. None indicates that $\mathrm{bl}[j-1] \le -\mathit{bigbnd}$.

- Upper bound: is the upper bound specified for the variable. None indicates that $\mathrm{bu}[j-1] \ge \mathit{bigbnd}$.

- Lagr multiplier: is the Lagrange multiplier for the associated bound. This will be zero if State is FR unless $\mathrm{bl}[j-1] \le -\mathit{bigbnd}$ and $\mathrm{bu}[j-1] \ge \mathit{bigbnd}$, in which case the entry will be blank. If $x$ is optimal, the multiplier should be non-negative if State is LL and non-positive if State is UL.

- Slack: is the difference between the variable Value and the nearer of its (finite) bounds $\mathrm{bl}[j-1]$ and $\mathrm{bu}[j-1]$. A blank entry indicates that the associated variable is not bounded (i.e., $\mathrm{bl}[j-1] \le -\mathit{bigbnd}$ and $\mathrm{bu}[j-1] \ge \mathit{bigbnd}$).

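The meaning of the output for linear and nonlinear constraints is the same as that given above for variables, with $\mathrm{bl}[j-1]$ and $\mathrm{bu}[j-1]$ replaced by $\mathrm{bl}[n+j-1]$ and $\mathrm{bu}[n+j-1]$ respectively, and with the following changes in the heading:

- Linear constrnt: gives the name (lincon) and index $j$, for $j = 1, 2, \dots, \mathrm{nclin}$, of the linear constraint.

- Nonlin constrnt: gives the name (nlncon) and index ($j - \mathrm{nclin}$), for $j = \mathrm{nclin} + 1, \dots, \mathrm{nclin} + \mathrm{ncnln}$, of the nonlinear constraint.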

Note that movement off a constraint (as opposed to a variable moving away from its bound) can be interpreted as allowing the entry in the Slack column to become positive.

Numerical values are output with a fixed number of digits; they are not guaranteed to be accurate to this precision.

10 Example

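This example is based on Problem 71 in Hock and Schittkowski (1981) and involves the minimization of the nonlinear function

$$F(x) = x_1 x_4 (x_1 + x_2 + x_3) + x_3$$

subject to the bounds

$$1 \le x_i \le 5, \quad i = 1, 2, 3, 4,$$

to the general linear constraint

$$x_1 + x_2 + x_3 + x_4 \le 20,$$

and to the nonlinear constraints

$$x_1^2 + x_2^2 + x_3^2 + x_4^2 \le 40, \qquad x_1 x_2 x_3 x_4 \ge 25.$$

The initial point, which is infeasible, is

$$x_0 = (1, 5, 5, 1)^T,$$

with $F(x_0) = 16$.

The optimal solution (to five figures) is

$$x^* = (1.0, 4.7430, 3.8211, 1.3794)^T,$$

and $F(x^*) = 17.014$. One bound constraint and both nonlinear constraints are active at the solution.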
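The statements about the starting point and the solution can be checked by direct evaluation, without calling the solver. The sketch below uses hypothetical function names and hard-codes the data of Problem 71 of Hock and Schittkowski (bounds $1 \le x_i \le 5$, $x_1 + x_2 + x_3 + x_4 \le 20$, $x_1^2 + x_2^2 + x_3^2 + x_4^2 \le 40$, $x_1 x_2 x_3 x_4 \ge 25$); it returns the largest amount by which any bound or constraint is violated.

```c
/* Amount by which value lies outside [lo, hi]; 0 if it lies inside. */
static double violation(double value, double lo, double hi)
{
    if (value < lo) return lo - value;
    if (value > hi) return value - hi;
    return 0.0;
}

/* Objective of the example problem. */
double hs71_objective(const double x[4])
{
    return x[0] * x[3] * (x[0] + x[1] + x[2]) + x[2];
}

/* Largest violation over the variable bounds, the linear constraint and
   the two nonlinear constraints.  HUGE_BOUND plays the role of an
   absent bound in this sketch. */
double hs71_max_violation(const double x[4])
{
    const double HUGE_BOUND = 1.0e20;
    double worst = 0.0, v;
    int i;

    for (i = 0; i < 4; i++) {
        v = violation(x[i], 1.0, 5.0);
        if (v > worst) worst = v;
    }
    v = violation(x[0] + x[1] + x[2] + x[3], -HUGE_BOUND, 20.0);
    if (v > worst) worst = v;
    v = violation(x[0]*x[0] + x[1]*x[1] + x[2]*x[2] + x[3]*x[3],
                  -HUGE_BOUND, 40.0);
    if (v > worst) worst = v;
    v = violation(x[0] * x[1] * x[2] * x[3], 25.0, HUGE_BOUND);
    if (v > worst) worst = v;
    return worst;
}
```

At the starting point the sum of squares is 52, so the first nonlinear constraint is violated by 12; at the five-figure solution the remaining violation is only rounding in the printed digits.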

10.1 Program Text

Program Text (e04wdce.c)

10.2 Program Data

Program Data (e04wdce.d)

10.3 Program Results

Program Results (e04wdce.r)

Note: the remainder of this document is intended for more advanced users. Section 11 contains a detailed description of the algorithm which may be needed in order to understand Sections 12 and 13. Section 12 describes the optional arguments which may be set by calls to nag_opt_nlp_option_set_string (e04wfc), nag_opt_nlp_option_set_integer (e04wgc) and/or nag_opt_nlp_option_set_double (e04whc). Section 13 describes the quantities which can be requested to monitor the course of the computation.

11 Algorithmic Details

Here we summarise the main features of the SQP algorithm used in nag_opt_nlp_solve (e04wdc) and introduce some terminology used in the description of the function and its arguments. The SQP algorithm is fully described in

Gill et al. (2002).

11.1 Constraints and Slack Variables

The upper and lower bounds on the

components of

are said to define the

general constraints of the problem. nag_opt_nlp_solve (e04wdc) converts the general constraints to equalities by introducing a set of

slack variables . For example, the linear constraint

is replaced by

together with the bounded slack

. The minimization problem

(1) can therefore be written in the equivalent form

The general constraints become the equalities and , where and are the linear and nonlinear slacks.
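The slack reformulation can be sketched for a single linear constraint row as follows. This is a minimal illustration, not library code: the constraint l <= a^T x <= u is treated as a^T x - s = 0 with l <= s <= u, and the helper names linear_slack and slack_within_bounds are hypothetical.

```c
#include <stddef.h>

/* Slack value s = a^T x for one linear constraint row, so that
 * a^T x - s = 0 holds by construction. */
double linear_slack(const double a[], const double x[], size_t n)
{
    double s = 0.0;
    for (size_t j = 0; j < n; ++j)
        s += a[j] * x[j];
    return s;
}

/* Returns 1 when the slack satisfies its bounds l <= s <= u,
 * i.e. when the original constraint is satisfied; 0 otherwise. */
int slack_within_bounds(double s, double l, double u)
{
    return s >= l && s <= u;
}
```

With this form, the original inequality is feasible exactly when the slack lies within its bounds, which is why the solver only needs to handle equalities plus simple bounds.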

11.2 Major Iterations

The basic structure of the SQP algorithm involves

major and

minor iterations. The major iterations generate a sequence of iterates

that satisfy the linear constraints and converge to a point that satisfies the nonlinear constraints and the first-order conditions for optimality. At each iterate

a QP subproblem is used to generate a search direction towards the next iterate

. The constraints of the subproblem are formed from the linear constraints

and the linearized constraint

where

denotes the

Jacobian matrix, whose elements are the first derivatives of

evaluated at

. The QP constraints therefore comprise the

linear constraints

where

and

are bounded above and below by

and

as before. If the

matrix

and

-vector

are defined as

then the QP subproblem can be written as

where

is a quadratic approximation to a modified Lagrangian function (see

Gill et al. (2002)). The matrix

is a quasi-Newton approximation to the Hessian of the Lagrangian. A BFGS update is applied after each major iteration. If some of the variables enter the Lagrangian linearly, the Hessian will have some zero rows and columns. If the nonlinear variables appear first, then only the leading

rows and columns of the Hessian need to be approximated.
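For orientation, a textbook dense BFGS update is sketched below. It is not the update implemented inside nag_opt_nlp_solve (e04wdc), which works with a modified Lagrangian and its own safeguards; the function name bfgs_update, the row-major storage and the rule of skipping the update when the curvature condition fails are all assumptions of this sketch.

```c
#include <stddef.h>

/* Textbook BFGS update of a dense symmetric n-by-n matrix h (row-major):
 *     H <- H - (H s)(H s)^T / (s^T H s) + y y^T / (y^T s)
 * where s is the step in x and y is the change in gradient.
 * work must have length n. Returns 0 on success, or -1 (leaving h
 * unchanged) when y^T s <= 0 or s^T H s <= 0. */
int bfgs_update(size_t n, double h[], const double s[], const double y[],
                double work[])
{
    double sHs = 0.0, ys = 0.0;

    for (size_t i = 0; i < n; ++i) {
        work[i] = 0.0;
        for (size_t j = 0; j < n; ++j)
            work[i] += h[i * n + j] * s[j];   /* work = H s */
        sHs += s[i] * work[i];
        ys += y[i] * s[i];
    }
    if (ys <= 0.0 || sHs <= 0.0)
        return -1;                            /* skip the update */

    for (size_t i = 0; i < n; ++i)
        for (size_t j = 0; j < n; ++j)
            h[i * n + j] += y[i] * y[j] / ys - work[i] * work[j] / sHs;
    return 0;
}
```

After a successful update the new matrix satisfies the secant condition H s = y, which is the property that lets the approximation accumulate curvature information from one major iteration to the next.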

11.3 Minor Iterations

Solving the QP subproblem is itself an iterative procedure. Here, the iterations of the QP solver

nag_opt_sparse_convex_qp_solve (e04nqc) form the

minor iterations of the SQP method.

nag_opt_sparse_convex_qp_solve (e04nqc) uses a reduced-Hessian active-set method implemented as a reduced-gradient method. At each minor iteration, the constraints

are partitioned into the form

where the

basis matrix is square and nonsingular, and the matrices

and

are the remaining columns of

. The vectors

,

and

are the associated

basic,

superbasic and

nonbasic variables respectively; they are a permutation of the elements of

and

. At a QP subproblem, the basic and superbasic variables will lie somewhere between their bounds, while the nonbasic variables will normally be equal to one of their bounds. At each iteration,

is regarded as a set of independent variables that are free to move in any desired direction, namely one that will improve the value of the QP objective (or the sum of infeasibilities). The basic variables are then adjusted in order to ensure that

continues to satisfy

. The number of superbasic variables (

, say) therefore indicates the number of degrees of freedom remaining after the constraints have been satisfied. In broad terms,

is a measure of

how nonlinear the problem is. In particular,

will always be zero for LP problems.

If it appears that no improvement can be made with the current definition of , and , a nonbasic variable is selected to be added to , and the process is repeated with the value of increased by one. At all stages, if a basic or superbasic variable encounters one of its bounds, the variable is made nonbasic and the value of is decreased by one.

Associated with each of the equality constraints are the dual variables . Similarly, each variable in has an associated reduced gradient . The reduced gradients for the variables are the quantities , where is the gradient of the QP objective, and the reduced gradients for the slacks are the dual variables . The QP subproblem is optimal if for all nonbasic variables at their lower bounds, for all nonbasic variables at their upper bounds, and for other variables, including superbasics. In practice, an approximate QP solution is found by relaxing these conditions.
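The optimality test on the reduced gradients can be sketched as below. The sign convention assumed here is the usual one for minimization (non-negative reduced gradient at a lower bound, non-positive at an upper bound, zero otherwise), relaxed by a tolerance tol; the enum VarStatus and the function qp_is_optimal are hypothetical names, not part of the library.

```c
#include <stddef.h>

/* Hypothetical classification of a variable in the QP subproblem. */
typedef enum {
    VAR_AT_LOWER,   /* nonbasic at its lower bound */
    VAR_AT_UPPER,   /* nonbasic at its upper bound */
    VAR_BETWEEN     /* basic or superbasic, between its bounds */
} VarStatus;

/* Returns 1 if the reduced gradients d satisfy the (relaxed)
 * optimality conditions for a minimization problem, 0 otherwise. */
int qp_is_optimal(size_t n, const double d[], const VarStatus status[],
                  double tol)
{
    for (size_t j = 0; j < n; ++j) {
        switch (status[j]) {
        case VAR_AT_LOWER:
            if (d[j] < -tol) return 0;   /* moving up would reduce the objective */
            break;
        case VAR_AT_UPPER:
            if (d[j] > tol) return 0;    /* moving down would reduce the objective */
            break;
        case VAR_BETWEEN:
            if (d[j] < -tol || d[j] > tol) return 0;
            break;
        }
    }
    return 1;
}
```

A positive tol corresponds to the relaxed conditions mentioned above, under which an approximate QP solution is accepted.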

11.4 The Merit Function

After a QP subproblem has been solved, new estimates of the solution are computed using a linesearch on the augmented Lagrangian merit function

where

is a diagonal matrix of penalty parameters

, and

now refers to dual variables for the nonlinear constraints in

(1). If

denotes the current solution estimate and

denotes the QP solution, the linesearch determines a step

such that the new point

gives a

sufficient decrease in the merit function

. When necessary, the penalties in

are increased by the minimum-norm perturbation that ensures descent for

(see

Gill et al. (1992)). The value of

is adjusted to minimize the merit function as a function of

before the solution of the QP subproblem (see

Gill et al. (1986) and

Eldersveld (1991)).
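A minimal sketch of evaluating an augmented Lagrangian merit function is given below. It assumes the common form M = f - pi^T (F - s) + (1/2) (F - s)^T D (F - s), with the diagonal of D passed as the array rho; the function name merit_value and the argument layout are hypothetical, and the exact form used by the library should be taken from the cited references.

```c
#include <stddef.h>

/* Augmented Lagrangian merit value (assumed form):
 *     M = f - sum_i pi[i]*r[i] + 0.5 * sum_i rho[i]*r[i]^2,
 * where r[i] = F[i] - s[i] is the residual of nonlinear constraint i
 * against its slack and rho[i] >= 0 is its penalty parameter. */
double merit_value(double f, size_t m, const double F[], const double s[],
                   const double pi[], const double rho[])
{
    double value = f;
    for (size_t i = 0; i < m; ++i) {
        const double r = F[i] - s[i];
        value += -pi[i] * r + 0.5 * rho[i] * r * r;
    }
    return value;
}
```

Increasing an entry of rho raises the cost of leaving the corresponding residual nonzero, which is how larger penalties can restore descent along the search direction.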

11.5 Treatment of Constraint Infeasibilities

nag_opt_nlp_solve (e04wdc) makes explicit allowance for infeasible constraints. First, infeasible

linear constraints are detected by solving the linear program

where

is a vector of ones, and the nonlinear constraint bounds are temporarily excluded from

and

. This is equivalent to minimizing the sum of the general linear constraint violations subject to the bounds on

. (The sum is the

-norm of the linear constraint violations. In the linear programming literature, the approach is called

elastic programming.)

The linear constraints are infeasible if the optimal solution of

(10) has

or

. nag_opt_nlp_solve (e04wdc) then terminates without computing the nonlinear functions.
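The quantity minimized in this phase, the sum of the linear constraint violations, can be sketched for a fixed point x as follows. This only evaluates the measure; it does not solve the linear program. The names bound_violation and sum_linear_violations and the row-major storage of the matrix are assumptions of the sketch.

```c
#include <stddef.h>

/* Amount by which v lies outside [l, u]; zero when l <= v <= u. */
double bound_violation(double v, double l, double u)
{
    if (v < l) return l - v;
    if (v > u) return v - u;
    return 0.0;
}

/* Sum of violations (1-norm) of the m linear constraints
 * l <= A x <= u, with A stored row-major as an m-by-n array. */
double sum_linear_violations(size_t m, size_t n, const double a[],
                             const double x[], const double l[],
                             const double u[])
{
    double total = 0.0;
    for (size_t i = 0; i < m; ++i) {
        double row = 0.0;
        for (size_t j = 0; j < n; ++j)
            row += a[i * n + j] * x[j];
        total += bound_violation(row, l[i], u[i]);
    }
    return total;
}
```

A value of zero means that x satisfies every general linear constraint; a strictly positive minimum over all x within their bounds indicates that the linear constraints are infeasible.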

Otherwise, all subsequent iterates satisfy the linear constraints. (Such a strategy allows linear constraints to be used to define a region in which the functions can be safely evaluated.) nag_opt_nlp_solve (e04wdc) proceeds to solve nonlinear problems as given, using search directions obtained from the sequence of QP subproblems (see

(6)).

If a QP subproblem proves to be infeasible or unbounded (or if the dual variables

for the nonlinear constraints become large), nag_opt_nlp_solve (e04wdc) enters ‘elastic’ mode and thereafter solves the problem

where

is a non-negative argument (the

elastic weight), and

is called a

composite objective (the

penalty function for the nonlinear constraints).

The value of may increase automatically by multiples of if the optimal and continue to be nonzero. If is sufficiently large, this is equivalent to minimizing the sum of the nonlinear constraint violations subject to the linear constraints and bounds.

The initial value of is controlled by the optional argument .

12 Optional Arguments

Several optional arguments in nag_opt_nlp_solve (e04wdc) define choices in the problem specification or the algorithm logic. In order to reduce the number of formal arguments of nag_opt_nlp_solve (e04wdc) these optional arguments have associated default values that are appropriate for most problems. Therefore, you need only specify those optional arguments whose values are to be different from their default values.

The remainder of this section can be skipped if you wish to use the default values for all optional arguments.

The following is a list of the optional arguments available. A full description of each optional argument is provided in

Section 12.1.

Optional arguments may be specified by calling one, or more, of the functions

nag_opt_nlp_option_set_file (e04wec),

nag_opt_nlp_option_set_string (e04wfc),

nag_opt_nlp_option_set_integer (e04wgc) and nag_opt_nlp_option_set_double (e04whc)

before a call to nag_opt_nlp_solve (e04wdc).

nag_opt_nlp_option_set_file (e04wec) reads options from an external options file, with

Begin and

End as the first and last lines respectively and each intermediate line defining a single optional argument. For example,

Begin

Print Level = 5

End

The call

nag_opt_nlp_option_set_file(ioptns, &state, &fail);

can then be used to read the file on

the descriptor

ioptns as returned by a call of

nag_open_file (x04acc).

fail.code = NE_NOERROR on successful exit.

nag_opt_nlp_option_set_file (e04wec) should be consulted for a full description of this method of supplying optional arguments.

All optional arguments not specified by you are set to their default values. Optional arguments specified by you are unaltered by nag_opt_nlp_solve (e04wdc) (unless they define invalid values) and so remain in effect for subsequent calls to nag_opt_nlp_solve (e04wdc), unless altered by you.

12.1 Description of the Optional Arguments

For each option, we give a summary line, a description of the optional argument and details of constraints.

The summary line contains:

- the keywords, where the minimum abbreviation of each keyword is underlined (if no characters of an optional qualifier are underlined, the qualifier may be omitted);

- a parameter value,

where the letters , denote options that take character, integer and real values respectively;

- the default value, where the symbol is a generic notation for machine precision (see nag_machine_precision (X02AJC)), and denotes the relative precision of the objective function , and signifies the value of .

Keywords and character values are case and white space insensitive.

Optional arguments used to specify files (e.g., optional arguments

and

) have type Nag_FileID (see

Section 3.2.1.1 in the Essential Introduction). This ID value must either be set to

(the default value) in which case there will be no output, or will be as returned by a call of

nag_open_file (x04acc).

| Central Difference Interval | | Default |

When , the central-difference interval is used near an optimal solution to obtain more accurate (but more expensive) estimates of gradients. Twice as many function evaluations are required compared to forward differencing. The interval used for the th variable is . The resulting derivative estimates should be accurate to , unless the functions are badly scaled.

If you supply a value for this optional parameter, a small value between and is appropriate.
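A central-difference estimate of one partial derivative can be sketched as below. The sketch assumes that the interval for variable j is h = r*(1 + |x_j|), where r is the central-difference interval; the typedef ScalarFn, the function central_difference and the test function sample_objective are hypothetical.

```c
#include <math.h>
#include <stddef.h>

/* Hypothetical signature for a scalar function of n variables. */
typedef double (*ScalarFn)(const double x[], size_t n);

/* Example function used for illustration: f(x) = sum_j x_j^2. */
double sample_objective(const double x[], size_t n)
{
    double f = 0.0;
    for (size_t j = 0; j < n; ++j)
        f += x[j] * x[j];
    return f;
}

/* Central-difference estimate of df/dx_j using the assumed interval
 * h = r * (1 + |x_j|). Needs two function evaluations; x is restored
 * to its original value before returning. */
double central_difference(ScalarFn f, double x[], size_t n, size_t j,
                          double r)
{
    const double xj = x[j];
    const double h = r * (1.0 + fabs(xj));
    double fplus, fminus;

    x[j] = xj + h;
    fplus = f(x, n);
    x[j] = xj - h;
    fminus = f(x, n);
    x[j] = xj;
    return (fplus - fminus) / (2.0 * h);
}
```

Each derivative costs two function evaluations here, compared with one extra evaluation per variable for forward differences, which is the factor of two mentioned above.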

| Check Frequency | | Default |

Every th minor iteration after the most recent basis factorization, a numerical test is made to see if the current solution satisfies the general linear constraints (the linear constraints and the linearized nonlinear constraints, if any). The constraints are of the form , where is the set of slack variables. To perform the numerical test, the residual vector is computed. If the largest component of is judged to be too large, the current basis is refactorized and the basic variables are recomputed to satisfy the general constraints more accurately. If , the value of is used and effectively no checks are made.

is useful for debugging purposes, but otherwise this option should not be needed.

This option controls the specification of the initial working set in the procedure for finding a feasible point for the linear constraints and bounds and in the first QP subproblem thereafter. With a Cold Start, the first working set is chosen by nag_opt_nlp_solve (e04wdc) based on the values of the variables and constraints at the initial point. Broadly speaking, the initial working set will include equality constraints and bounds or inequality constraints that violate or ‘nearly’ satisfy their bounds (to within ).

With a Warm Start

, you must set the

istate array and define

clamda and

h as discussed in

Section 5.

istate values associated with bounds and linear constraints determine the initial working set of the procedure to find a feasible point with respect to the bounds and linear constraints.

istate values associated with nonlinear constraints determine the initial working set of the first QP subproblem after such a feasible point has been found. nag_opt_nlp_solve (e04wdc) will override your specification of

istate if necessary, so that a poor choice of the working set will not cause a fatal error. For instance, any elements of

istate which are set to

,

will be reset to zero, as will any elements which are set to

when the corresponding elements of

bl and

bu are not equal. A warm start will be advantageous if a good estimate of the initial working set is available – for example, when nag_opt_nlp_solve (e04wdc) is called repeatedly to solve related problems.

| Crash Tolerance | | Default |

If a Cold Start

is specified, an internal Crash procedure is used to select an initial basis from certain rows and columns of the constraint matrix

. The optional argument

determines which rows and columns of

are eligible initially, and how many times the Crash procedure is called. Columns of

are used to pad the basis where necessary.

|

Meaning |

|

The initial basis contains only slack variables: . |

|

The Crash procedure is called once, looking for a triangular basis in all rows and columns of . |

|

The Crash procedure is called twice (if there are nonlinear constraints). The first call looks for a triangular basis in linear rows, and the iteration proceeds with simplex iterations until the linear constraints are satisfied. The Jacobian is then evaluated for the first major iteration and the Crash procedure is called again to find a triangular basis in the nonlinear rows (retaining the current basis for linear rows). |

|

The Crash procedure is called up to three times (if there are nonlinear constraints). The first two calls treat linear equalities and linear inequalities separately. As before, the last call treats nonlinear rows before the first major iteration. |

If , certain slacks on inequality rows are selected for the basis first. (If , numerical values are used to exclude slacks that are close to a bound). The Crash procedure then makes several passes through the columns of , searching for a basis matrix that is essentially triangular. A column is assigned to ‘pivot’ on a particular row if the column contains a suitably large element in a row that has not yet been assigned. (The pivot elements ultimately form the diagonals of the triangular basis.) For remaining unassigned rows, slack variables are inserted to complete the basis.

The allows the starting Crash procedure to ignore certain ‘small’ nonzeros in each column of . If is the largest element in column , other nonzeros of in the columns are ignored if . (To be meaningful, must be in the range .)

When , the basis obtained by the Crash procedure may not be strictly triangular, but it is likely to be nonsingular and almost triangular. The intention is to obtain a starting basis containing more columns of and fewer (arbitrary) slacks. A feasible solution may be reached sooner on some problems.

For example, suppose the first columns of form the matrix shown under ; i.e., a tridiagonal matrix with entries , , . To help the Crash procedure choose all columns for the initial basis, we would specify a of for some value of .

This special keyword may be used to reset all optional arguments to their default values.

| Derivative Level | | Default |

Optional argument

specifies which nonlinear function gradients are known analytically and will be supplied to nag_opt_nlp_solve (e04wdc) by user-supplied functions

objfun and

confun.

|

Meaning |

|

All objective and constraint gradients are known. |

|

All constraint gradients are known, but some or all components of the objective gradient are unknown. |

|

The objective gradient is known, but some or all of the constraint gradients are unknown. |

|

Some components of the objective gradient are unknown and some of the constraint gradients are unknown. |

The value should be used whenever possible. It is the most reliable and will usually be the most efficient.

If

or

, nag_opt_nlp_solve (e04wdc) will

estimate the missing components of the objective gradient, using finite differences. This may simplify the coding of

objfun. However, it could increase the total run-time substantially (since a special call to

objfun is required for each missing element), and there is less assurance that an acceptable solution will be located. If the nonlinear variables are not well scaled, it may be necessary to specify a non-default optional argument

.

If

or

, nag_opt_nlp_solve (e04wdc) will estimate missing elements of the Jacobian. For each column of the Jacobian, one call to

confun is needed to estimate all missing elements in that column, if any.

At times, central differences are used rather than forward differences. (This is not under your control.)

| Derivative Linesearch | | Default |

At each major iteration a linesearch is used to improve the merit function. Optional argument uses safeguarded cubic interpolation and requires both function and gradient values to compute estimates of the step . If some analytic derivatives are not provided, or optional argument is specified, nag_opt_nlp_solve (e04wdc) employs a linesearch based upon safeguarded quadratic interpolation, which does not require gradient evaluations.

A nonderivative linesearch can be slightly less robust on difficult problems, and it is recommended that the default be used if the functions and derivatives can be computed at approximately the same cost. If the gradients are very expensive relative to the functions, a nonderivative linesearch may give a significant decrease in computation time.

If

is selected, nag_opt_nlp_solve (e04wdc) signals the evaluation of the linesearch by calling

objfun with

. If the potential saving provided by a nonderivative linesearch is to be realised, it is essential that

objfun be coded so that derivatives are not computed when

.

| Difference Interval | | Default |

This alters the interval

used to estimate gradients by forward differences. It does so in the following circumstances:

| – |

in the initial (‘cheap’) phase of verifying the problem derivatives; |

| – |

for verifying the problem derivatives; |

| – |

for estimating missing derivatives. |

In all cases, a derivative with respect to is estimated by perturbing that component of to the value , and then evaluating or at the perturbed point. The resulting gradient estimates should be accurate to unless the functions are badly scaled. Judicious alteration of may sometimes lead to greater accuracy.

If you supply a value for this optional parameter, a small value between and is appropriate.
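The forward-difference scheme described above can be sketched as follows. It assumes that component j is perturbed to x_j + r*(1 + |x_j|), where r is the difference interval; the typedef ScalarFn and the functions forward_difference_gradient and sample_objective are hypothetical names used only for illustration.

```c
#include <math.h>
#include <stddef.h>

/* Hypothetical signature for a scalar function of n variables. */
typedef double (*ScalarFn)(const double x[], size_t n);

/* Example function used for illustration: f(x) = sum_j x_j^2. */
double sample_objective(const double x[], size_t n)
{
    double f = 0.0;
    for (size_t j = 0; j < n; ++j)
        f += x[j] * x[j];
    return f;
}

/* Forward-difference estimate of the whole gradient. One base
 * evaluation plus one evaluation per variable; each component of x
 * is perturbed in turn by h = r * (1 + |x_j|) and then restored. */
void forward_difference_gradient(ScalarFn f, double x[], size_t n,
                                 double r, double g[])
{
    const double f0 = f(x, n);
    for (size_t j = 0; j < n; ++j) {
        const double xj = x[j];
        const double h = r * (1.0 + fabs(xj));
        x[j] = xj + h;
        g[j] = (f(x, n) - f0) / h;
        x[j] = xj;
    }
}
```

For the quadratic test function the estimate differs from the exact derivative by h, which illustrates the first-order truncation error of forward differences and why the choice of interval affects accuracy.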

(See

Section 3.2.1.1 in the Essential Introduction for further information on NAG data types.)

Optional arguments

and

are similar to optional arguments

and

, but they record solution information in a manner that is more direct and more easily modified. A full description of information recorded in optional arguments

and

is given in

Gill et al. (2005a).

If , the last solution obtained will be output to the file associated with ID .

If , the file associated with ID ,

containing basis information, will be read. The file will usually have been output previously as a . The file will not be accessed if optional arguments or are specified.

| Elastic Weight | | Default |

This keyword determines the initial weight

associated with the problem

(11) (see

Section 11.5).

At major iteration , if elastic mode has not yet started, a scale factor is defined from the current objective gradient. Elastic mode is then started if the QP subproblem is infeasible, or the QP dual variables are larger in magnitude than . The QP is re-solved in elastic mode with .

Thereafter, major iterations continue in elastic mode until they converge to a point that is optimal for

(11) (see

Section 11.5). If the point is feasible for equation

(1) , it is declared locally optimal. Otherwise,

is increased by a factor of

and major iterations continue. If

has already reached a maximum allowable value, equation

(1) is declared locally infeasible.

| Expand Frequency | | Default |

This option is part of the anti-cycling procedure designed to make progress even on highly degenerate problems.

For linear models, the strategy is to force a positive step at every iteration, at the expense of violating the bounds on the variables by a small amount. Suppose that the optional argument is . Over a period of iterations, the tolerance actually used by nag_opt_nlp_solve (e04wdc) increases from to (in steps of ).

For nonlinear models, the same procedure is used for iterations in which there is only one superbasic variable. (Cycling can occur only when the current solution is at a vertex of the feasible region.) Thus, zero steps are allowed if there is more than one superbasic variable, but otherwise positive steps are enforced.

Increasing helps reduce the number of slightly infeasible nonbasic variables (most of which are eliminated during a resetting procedure). However, it also diminishes the freedom to choose a large pivot element (see optional argument ).

| Factorization Frequency | | Default |

At most basis changes will occur between factorizations of the basis matrix.

With linear programs, the basis factors are usually updated every iteration. The default is reasonable for typical problems. Higher values up to (say) may be more efficient on well-scaled problems.

When the objective function is nonlinear, fewer basis updates will occur as an optimum is approached. The number of iterations between basis factorizations will therefore increase. During these iterations a test is made regularly (according to the optional argument ) to ensure that the general constraints are satisfied. If necessary the basis will be refactorized before the limit of updates is reached.

| Function Precision | | Default |

The relative function precision is intended to be a measure of the relative accuracy with which the functions can be computed. For example, if is computed as for some relevant and if the first significant digits are known to be correct, the appropriate value for would be .

(Ideally the functions or should have magnitude of order . If all functions are substantially less than in magnitude, should be the absolute precision. For example, if at some point and if the first significant digits are known to be correct, the appropriate value for would be .)

The default value of is appropriate for simple analytic functions.

In some cases the function values will be the result of extensive computation, possibly involving a costly iterative procedure that can provide few digits of precision. Specifying an appropriate may lead to savings, by allowing the linesearch procedure to terminate when the difference between function values along the search direction becomes as small as the absolute error in the values.

| Hessian Full Memory | | Default if |

| Hessian Limited Memory | | Default if |

These options select the method for storing and updating the approximate Hessian. (nag_opt_nlp_solve (e04wdc) uses a quasi-Newton approximation to the Hessian of the Lagrangian. A BFGS update is applied after each major iteration.)

If is specified, the approximate Hessian is treated as a dense matrix and the BFGS updates are applied explicitly. This option is most efficient when the number of variables is not too large (say, less than ). In this case, the storage requirement is fixed and one can expect -step Q-superlinear convergence to the solution.

should be used on problems where is very large. In this case a limited-memory procedure is used to update a diagonal Hessian approximation a limited number of times. (Updates are accumulated as a list of vector pairs. They are discarded at regular intervals after has been reset to their diagonal.)

| Hessian Frequency | | Default |

If optional argument is in effect and BFGS updates have already been carried out, the Hessian approximation is reset to the identity matrix. (For certain problems, occasional resets may improve convergence, but in general they should not be necessary.)

and have a similar effect to and (except that the latter retains the current diagonal during resets).

| Hessian Updates | | Default if , otherwise |

If optional argument is in effect and BFGS updates have already been carried out, all but the diagonal elements of the accumulated updates are discarded and the updating process starts again.

Broadly speaking, the more updates stored, the better the quality of the approximate Hessian. However, the more vectors stored, the greater the cost of each QP iteration. The default value is likely to give a robust algorithm without significant expense, but faster convergence can sometimes be obtained with significantly fewer updates (e.g., ).

| Infinite Bound Size | | Default |

If , defines the ‘infinite’ bound in the definition of the problem constraints. Any upper bound greater than or equal to will be regarded as (and similarly any lower bound less than or equal to will be regarded as ). If , the default value is used.
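The treatment of 'infinite' bounds can be sketched as below. The default value is passed in as a parameter rather than hard-coded, since it is not restated here; all three function names are hypothetical.

```c
/* Effective 'infinite' bound: the supplied value r if it is positive,
 * otherwise the default value. */
double effective_infinite_bound(double r, double default_value)
{
    return r > 0.0 ? r : default_value;
}

/* An upper bound at or above bigbnd is treated as plus infinity. */
int upper_bound_is_infinite(double bu, double bigbnd)
{
    return bu >= bigbnd;
}

/* A lower bound at or below -bigbnd is treated as minus infinity. */
int lower_bound_is_infinite(double bl, double bigbnd)
{
    return bl <= -bigbnd;
}
```

In practice this means that a variable or constraint with no real upper bound is specified by setting the corresponding element of bu to any value at least as large as the infinite bound size.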

| Iterations Limit | | Default |

The value of specifies the maximum number of minor iterations allowed (i.e., iterations of the simplex method or the QP algorithm), summed over all major iterations. (See also the description of the optional argument .)

| Linesearch Tolerance | | Default |

This tolerance, , controls the accuracy with which a step length will be located along the direction of search each iteration. At the start of each linesearch a target directional derivative for the merit function is identified. This argument determines the accuracy to which this target value is approximated, and it must be a value in the range .

The default value requests just moderate accuracy in the linesearch.

If the nonlinear functions are cheap to evaluate, a more accurate search may be appropriate; try .

If the nonlinear functions are expensive to evaluate, a less accurate search may be appropriate. If all gradients are known, try . (The number of major iterations might increase, but the total number of function evaluations may decrease enough to compensate.)

If not all gradients are known, a moderately accurate search remains appropriate. Each search will require only 1–5 function values (typically), but many function calls will then be needed to estimate missing gradients for the next iteration.

For nag_opt_nlp_solve (e04wdc), normally each optional argument specification is printed as it is supplied. Optional argument may be used to suppress the printing and optional argument may be used to turn on printing.

| LU Density Tolerance | | Default |

| LU Singularity Tolerance | | Default |

The density tolerance, , is used during factorization of the basis matrix . Columns of and rows of are formed one at a time, and the remaining rows and columns of the basis are altered appropriately. At any stage, if the density of the remaining matrix exceeds , the Markowitz strategy for choosing pivots is terminated, and the remaining matrix is factored by a dense procedure. Raising the density tolerance towards may give slightly sparser factors, with a slight increase in factorization time.

The singularity tolerance, , helps guard against ill-conditioned basis matrices. After is refactorized, the diagonal elements of are tested as follows: if or , the th column of the basis is replaced by the corresponding slack variable. (This is most likely to occur after a restart.)

| LU Factor Tolerance | | Default |

| LU Update Tolerance | | Default |

The values of

and

affect the stability of the basis factorization

, during refactorization and updates respectively. The lower triangular matrix

is a product of matrices of the form

where the multipliers

will satisfy

. The default values of

and

usually strike a good compromise between stability and sparsity. They must satisfy

,

.

For large and relatively dense problems, (say) may give a useful improvement in stability without impairing sparsity to a serious degree.

For certain very regular structures (e.g., band matrices) it may be necessary to reduce

in order to achieve stability. For example, if the columns of

include a sub-matrix of the form

one should set both

and

to values in the range

.

| LU Partial Pivoting | | Default |

The factorization implements a Markowitz-type search for pivots that locally minimize the fill-in subject to a threshold pivoting stability criterion. The default option is to use threshold partial pivoting. The optional arguments and are more expensive than partial pivoting but are more stable and better at revealing rank, as long as is not too large (say ). When numerical difficulties are encountered, nag_opt_nlp_solve (e04wdc) automatically reduces the tolerance towards and switches (if necessary) to rook or complete pivoting, before reverting to the default or specified options at the next refactorization (with , relevant messages are output to the ).

| Major Feasibility Tolerance | | Default |

This tolerance, , specifies how accurately the nonlinear constraints should be satisfied. The default value is appropriate when the linear and nonlinear constraints contain data to about that accuracy.

Let

be the maximum nonlinear constraint violation, normalized by the size of the solution, which is required to satisfy

where

is the violation of the

th nonlinear constraint

.

In the major iteration log (see

Section 13.2),

appears as the quantity labelled ‘Feasible’. If some of the problem functions are known to be of low accuracy, a larger

may be appropriate.
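The feasibility measure can be sketched as the largest nonlinear constraint violation divided by the size of the solution. The choice of norm is an assumption of this sketch (the infinity norm is used), as is the fallback to the raw violation when x is zero; the function names are hypothetical.

```c
#include <math.h>
#include <stddef.h>

/* Largest entry of viol (each assumed >= 0), divided by the infinity
 * norm of x. If x is the zero vector, the unscaled maximum violation
 * is returned (a choice made for this sketch only). */
double normalized_max_violation(size_t m, const double viol[],
                                size_t n, const double x[])
{
    double maxviol = 0.0, xnorm = 0.0;

    for (size_t i = 0; i < m; ++i)
        if (viol[i] > maxviol)
            maxviol = viol[i];
    for (size_t j = 0; j < n; ++j) {
        const double a = fabs(x[j]);
        if (a > xnorm)
            xnorm = a;
    }
    return xnorm > 0.0 ? maxviol / xnorm : maxviol;
}

/* Returns 1 when the normalized violation is within the tolerance. */
int nonlinear_constraints_feasible(size_t m, const double viol[],
                                   size_t n, const double x[], double tol)
{
    return normalized_max_violation(m, viol, n, x) <= tol;
}
```

Because the violation is scaled by the size of the solution, the same tolerance behaves consistently for problems whose variables differ widely in magnitude.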

| Major Optimality Tolerance | | Default |

This tolerance,

, specifies the final accuracy of the dual variables. On successful termination, nag_opt_nlp_solve (e04wdc) will have computed a solution

such that

where

is an estimate of the complementarity slackness for variable

where

. The values

are computed from the final QP solution using the reduced gradients

(where

is the

th component of the objective gradient,

is the associated column of the constraint matrix

, and

is the set of QP dual variables):

In the , appears as the quantity labelled ‘Optimal’.

| Major Iterations Limit | | Default |

This is the maximum number of major iterations allowed. It is intended to guard against an excessive number of linearizations of the constraints. If , optimality and feasibility are checked.

| Major Print Level | | Default |

This controls the amount of output to the optional arguments and at each major iteration. suppresses most output, except for error messages. gives normal output for linear and nonlinear problems, and gives additional details of the Jacobian factorization that commences each major iteration.

In general, the value being specified may be thought of as a binary number of the form

where each letter stands for a digit that is either

or

as follows:

|

a single line that gives a summary of each major iteration. (This entry in is not strictly binary since the summary line is printed whenever ); |

|

basis statistics, i.e., information relating to the basis matrix whenever it is refactorized. (This output is always provided if ); |

|

, the nonlinear variables involved in the objective function or the constraints. These appear under the heading ‘Jacobian variables’; |

|

, the dual variables for the nonlinear constraints. These appear under the heading ‘Multiplier estimates’; |

|

, the values of the nonlinear constraint functions; |

|

, the Jacobian matrix. This appears under the heading ‘ and Jacobian’. |

To obtain output of any items , set the corresponding digit to , otherwise to .

If the Jacobian digit is set ($J = 1$), the Jacobian matrix will be output column-wise at the start of each major iteration. Column $j$ will be preceded by the value of the corresponding variable $x_j$ and a key to indicate whether the variable is basic, superbasic or nonbasic. (Hence if $J = 1$, there is no reason to set the variables digit ($X = 1$) unless the objective contains more nonlinear variables than the Jacobian.) A typical line of output is

3 1.250000e+01 BS 1 1.00000e+00 4 2.00000e+00

which would mean that $x_3$ is basic at value $12.5$, and the third column of the Jacobian has elements of $1.0$ and $2.0$ in rows $1$ and $4$.
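As an illustration only, the digit convention described above can be decoded as in the following C sketch. It assumes that the value is read as a decimal number whose digits are each 0 or 1, with the summary line in the units position and the Jacobian in the most significant position, and that the summary line and the basis statistics are switched on by any value of at least 1 and 10 respectively. The type MajorPrintFlags and the function decode_major_print_level are hypothetical helpers, not part of the NAG Library.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical helper type: one flag per item in the list above. */
typedef struct {
    bool summary_line;      /* units digit                 */
    bool basis_statistics;  /* tens digit                  */
    bool variables;         /* hundreds digit              */
    bool multipliers;       /* thousands digit             */
    bool constraint_values; /* ten-thousands digit         */
    bool jacobian;          /* hundred-thousands digit     */
} MajorPrintFlags;

/* Decode a Major Print Level value under the assumptions stated above. */
static MajorPrintFlags decode_major_print_level(long level)
{
    MajorPrintFlags f;

    assert(level >= 0);
    /* Assumed thresholds: these two entries are not strictly binary. */
    f.summary_line      = level >= 1;
    f.basis_statistics  = level >= 10;
    /* The remaining entries are read digit by digit. */
    f.variables         = (level / 100) % 10 != 0;
    f.multipliers       = (level / 1000) % 10 != 0;
    f.constraint_values = (level / 10000) % 10 != 0;
    f.jacobian          = (level / 100000) % 10 != 0;
    return f;
}
```

Under these assumptions a value of 11 selects only the summary line and the basis statistics, whereas 100100 additionally selects the variables and the Jacobian.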

| Major Step Limit | | Default |

This argument limits the change in $x$ during a linesearch. It applies to all nonlinear problems, once a ‘feasible solution’ or ‘feasible subproblem’ has been found. A linesearch determines a step $\alpha$ over the range $0 < \alpha \leq \beta$, where $\beta$ is $1$ if there are nonlinear constraints, or is the step to the nearest upper or lower bound on $x$ if all the constraints are linear. Normally, the first step length tried is $\alpha_1 = \min(1, \beta)$.

| 1. | In some cases, such as $f(x) = a e^{bx}$ or $f(x) = a x^b$, even a moderate change in the components of $x$ can lead to floating-point overflow. The value $r$ of this argument is therefore used to define a limit $\bar{\beta} = r(1 + \|x\|)/\|p\|$ (where $p$ is the search direction), and the first evaluation of $f(x)$ is at the potentially smaller step length $\alpha_1 = \min(1, \bar{\beta}, \beta)$. |
| 2. | Wherever possible, upper and lower bounds on $x$ should be used to prevent evaluation of nonlinear functions at meaningless points. The optional argument Major Step Limit provides an additional safeguard. The default value $r = 2.0$ should not affect progress on well behaved problems, but setting $r = 0.1$ or $0.01$ may be helpful when rapidly varying functions are present. A ‘good’ starting point may be required. An important application is to the class of nonlinear least squares problems. |
| 3. | In cases where several local optima exist, specifying a small value for $r$ may help locate an optimum near the starting point. |
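The effect of the step limit on the first trial step can be sketched in C as follows. This is a minimal illustration, not the library's implementation: it assumes the limit takes the form $r(1 + \|x\|)/\|p\|$, that the norms of $x$ and of the search direction $p$ have already been computed by the caller, and that the first trial step is the smallest of $1$, that limit and the linesearch upper bound $\beta$. The function name first_step_length is hypothetical.

```c
#include <assert.h>

/* Sketch of the first trial step length under a major step limit r.
 *   xnorm : norm of the current point x   (computed by the caller)
 *   pnorm : norm of the search direction p (must be positive)
 *   r     : value of the step limit
 *   beta  : upper end of the linesearch range
 */
static double first_step_length(double xnorm, double pnorm,
                                double r, double beta)
{
    double beta_bar;
    double alpha = 1.0;

    assert(pnorm > 0.0 && r > 0.0 && beta > 0.0);

    /* Assumed form of the limit implied by r. */
    beta_bar = r * (1.0 + xnorm) / pnorm;

    if (beta < alpha)     alpha = beta;
    if (beta_bar < alpha) alpha = beta_bar;
    return alpha;
}
```

For example, with a point of norm 5, a search direction of norm 100, $r = 2$ and $\beta = 1$, the limit is $0.12$, so the first function evaluation would be at a step of $0.12$ rather than $1$.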

The keywords Minimize and Maximize specify the required direction of optimization. The chosen direction applies to both linear and nonlinear terms in the objective.

The keyword Feasible Point means ‘Ignore the objective function, while finding a feasible point for the linear and nonlinear constraints’. It can be used to check that the nonlinear constraints are feasible without altering the call to nag_opt_nlp_solve (e04wdc).

| Minor Feasibility Tolerance | | |

| Feasibility Tolerance | | Default |

nag_opt_nlp_solve (e04wdc) tries to ensure that all variables eventually satisfy their upper and lower bounds to within this tolerance. This includes slack variables. Hence, general linear constraints should also be satisfied to within the same tolerance.

Feasibility with respect to nonlinear constraints is judged by the optional argument Major Feasibility Tolerance (not by this tolerance).

If the bounds and linear constraints cannot be satisfied to within this tolerance, the problem is declared infeasible. If the corresponding sum of infeasibilities is quite small, it may be appropriate to raise the tolerance by a factor of $10$ or $100$. Otherwise, some error in the data should be suspected.

Nonlinear functions will be evaluated only at points that satisfy the bounds and linear constraints. If there are regions where a function is undefined, every attempt should be made to eliminate these regions from the problem.

For example, if $f(x) = \sqrt{x_1} + \log x_2$, it is essential to place lower bounds on both variables. If the tolerance is $10^{-6}$, the bounds $x_1 \geq 10^{-5}$ and $x_2 \geq 10^{-4}$ might be appropriate. (The log singularity is more serious. In general, keep $x$ as far away from singularities as possible.)

If scaling is in effect (see the optional argument Scale Option), feasibility is defined in terms of the scaled problem (since it is then more likely to be meaningful).

In reality, nag_opt_nlp_solve (e04wdc) uses this tolerance as a feasibility tolerance for satisfying the bounds on $x$ and $s$ in each QP subproblem. If the sum of infeasibilities cannot be reduced to zero, the QP subproblem is declared infeasible. nag_opt_nlp_solve (e04wdc) then continues in elastic mode (with only the linearized nonlinear constraints defined to be elastic). See the description of the optional argument Elastic Weight.
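The notion of satisfying bounds ‘to within a tolerance’ can be illustrated with a short C sketch. It is not the library's internal test: it assumes that a variable counts as feasible when it lies in the interval from its lower bound minus the tolerance to its upper bound plus the tolerance, and that the sum of infeasibilities is the total distance by which the remaining variables violate their bounds. The function name sum_infeasibilities is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Sum of bound violations for x, ignoring violations of at most tol.
 * bl[i] and bu[i] are the lower and upper bounds on x[i].
 */
static double sum_infeasibilities(const double *x, const double *bl,
                                  const double *bu, size_t n, double tol)
{
    double sum = 0.0;
    size_t i;

    assert(tol >= 0.0);
    for (i = 0; i < n; ++i) {
        if (x[i] < bl[i] - tol)
            sum += bl[i] - x[i];   /* below the lower bound */
        else if (x[i] > bu[i] + tol)
            sum += x[i] - bu[i];   /* above the upper bound */
    }
    return sum;
}
```

A point is then accepted as feasible with respect to its bounds when the returned sum is zero. A small positive sum suggests that a looser tolerance might be appropriate, while a large one points to an error in the data.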

| Minor Iterations Limit | | Default |

If the number of minor iterations for the optimality phase of the QP subproblem exceeds this limit, then all nonbasic QP variables that have not yet moved are frozen at their current values and the reduced QP is solved to optimality.

Note that more minor iterations than this limit may be necessary to solve the reduced QP to optimality. These extra iterations are necessary to ensure that the terminated point gives a suitable direction for the linesearch.

In the major iteration log (see Section 13.2) a t at the end of a line indicates that the corresponding QP was artificially terminated using this limit.

Compare with the optional argument Iterations Limit, which defines an independent absolute limit on the total number of minor iterations (summed over all QP subproblems).

| Minor Print Level | | Default |

This controls the amount of output to the Print File and the Summary File during solution of the QP subproblems. The value of Minor Print Level has the following effect:

| Minor Print Level | Output |
| $0$ | No minor iteration output except error messages. |
| $\geq 1$ | A single line of output at each minor iteration (controlled by optional arguments Print Frequency and Summary Frequency). |
| $\geq 10$ | Basis factorization statistics generated during the periodic refactorization of the basis (see the optional argument Factorization Frequency). Statistics for the first factorization each major iteration are controlled by the optional argument Major Print Level. |

| New Basis File | | Default |

| Backup Basis File | | Default |

| Save Frequency | | Default |

(See Section 3.2.1.1 in the Essential Introduction for further information on NAG data types.)

New Basis File and Backup Basis File are sometimes referred to as basis maps. They contain the most compact representation of the state of each variable. They are intended for restarting the solution of a problem at a point that was reached by an earlier run. For nontrivial problems, it is advisable to save basis maps at the end of a run, in order to restart the run if necessary.

If New Basis File $> 0$, a basis map will be saved on the New Basis File at intervals of Save Frequency iterations. The first record of the file will contain the word PROCEEDING if the run is still in progress. A basis map will also be saved at the end of a run, with some other word indicating the final solution status.

If Backup Basis File $> 0$, it is intended as a safeguard against losing the results of a long run. Suppose that a New Basis File is being saved every $100$ (Save Frequency) iterations, and that nag_opt_nlp_solve (e04wdc) is about to save such a basis at iteration $2000$. It is conceivable that the run may be interrupted during the next few milliseconds (in the middle of the save). In this case the basis file will be corrupted and the run will have been essentially wasted.

To eliminate this risk, both a New Basis File and a Backup Basis File may be specified using calls of nag_open_file (x04acc). The current basis will then be saved every $100$ iterations, first on the New Basis File and then immediately on the Backup Basis File. If the run is interrupted at iteration $2000$ during the save on the New Basis File, there will still be a usable basis on the Backup Basis File (corresponding to iteration $1900$).

Note that a new basis will be saved in New Basis File at the end of a run if it terminates normally, but it will not be saved in Backup Basis File. In the above example, if an optimum solution is found at iteration $2050$ (or if the iteration limit is $2050$), the final basis in the New Basis File will correspond to iteration $2050$, but the last basis saved in the Backup Basis File will be the one for iteration $2000$.
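The bookkeeping behind the two files can be sketched in C. This is an illustration under stated assumptions, not library code: periodic saves are taken to occur at exact multiples of the save frequency, both files are written at each periodic save, and only the new basis file receives the extra save on normal termination. The type BasisSaveState and the function last_saved_bases are hypothetical, and the numbers in the usage note are illustrative.

```c
#include <assert.h>

/* Iteration numbers of the bases most recently written to each file. */
typedef struct {
    long new_basis_iter;
    long backup_basis_iter;
} BasisSaveState;

/* State of the two files after a run that stops at final_iter.
 * normal_termination is nonzero if the run ended normally, in which
 * case a final basis is assumed to be written to the new file only.
 */
static BasisSaveState last_saved_bases(long final_iter, long save_freq,
                                       int normal_termination)
{
    BasisSaveState s;

    assert(save_freq > 0 && final_iter >= 0);

    /* Last periodic save: largest multiple of save_freq <= final_iter. */
    s.backup_basis_iter = (final_iter / save_freq) * save_freq;
    s.new_basis_iter    = normal_termination ? final_iter
                                             : s.backup_basis_iter;
    return s;
}
```

With a save frequency of 100 and a run that terminates normally at iteration 2050, the sketch reports iteration 2050 for the new basis file and iteration 2000 for the backup.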

A full description of information recorded in New Basis File and Backup Basis File is given in Gill et al. (2005a).

| New Superbasics Limit | | Default |

This option causes early termination of the QP subproblems if the number of free variables has increased significantly since the first feasible point. If the number of new superbasics is greater than this limit, the nonbasic variables that have not yet moved are frozen and the resulting smaller QP is solved to optimality.

In the major iteration log (see Section 13.1), a t at the end of a line indicates that the QP was terminated early in this way.

| Old Basis File | | Default |

(See Section 3.2.1.1 in the Essential Introduction for further information on NAG data types.)

If Old Basis File $> 0$, the basis maps information will be obtained from the file associated with that ID. The file will usually have been output previously as a New Basis File or Backup Basis File.

A full description of information recorded in New Basis File and Backup Basis File is given in Gill et al. (2005a).

The file will not be acceptable if the number of rows or columns in the problem has been altered.

This argument (Partial Price, with value $i$) is recommended for large problems that have significantly more variables than constraints. It reduces the work required for each ‘pricing’ operation (where a nonbasic variable is selected to become superbasic). When $i = 1$, all columns of the constraint matrix $(A \;\; {-I})$ are searched. Otherwise, $A$ and $I$ are partitioned to give $i$ roughly equal segments $A_j$ and $I_j$, for $j = 1, 2, \ldots, i$. If the previous pricing search was successful on $A_j$ and $I_j$, the next search begins on the segments $A_{j+1}$ and $I_{j+1}$. (All subscripts here are modulo $i$.) If a reduced gradient is found that is larger than some dynamic tolerance, the variable with the largest such reduced gradient (of appropriate sign) is selected to become superbasic. If nothing is found, the search continues on the next segments $A_{j+2}$ and $I_{j+2}$, and so on.

For time-stage models having $t$ time periods, Partial Price $t$ (or $t/2$ or $t/3$) may be appropriate.
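The cyclic segment search described above can be illustrated by a small C sketch. It shows only the partitioning and the wrap-around of the segment index, not the reduced-gradient test itself, and it uses 0-based segment indices for convenience. The choice of ‘roughly equal’ boundaries and the function names segment_bounds and next_segment are assumptions made for the illustration.

```c
#include <assert.h>
#include <stddef.h>

/* Half-open column range [*start, *end) of segment k when ncols
 * columns are split into nseg roughly equal segments (0-based k).
 */
static void segment_bounds(size_t ncols, size_t nseg, size_t k,
                           size_t *start, size_t *end)
{
    assert(nseg > 0 && k < nseg);
    *start = (k * ncols) / nseg;
    *end   = ((k + 1) * ncols) / nseg;
}

/* Segment on which the next pricing search begins, given the segment
 * searched last; indices wrap around modulo nseg.
 */
static size_t next_segment(size_t last, size_t nseg)
{
    assert(nseg > 0 && last < nseg);
    return (last + 1) % nseg;
}
```

With 10 columns and 3 segments this gives the column ranges 0 to 2, 3 to 5 and 6 to 9, and a search that last used the final segment wraps around to the first.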

| Pivot Tolerance | | Default |

During the solution of QP subproblems, the pivot tolerance is used to prevent columns entering the basis if they would cause the basis to become almost singular.